The Department of Defense (DoD) has migrated from a platform-based acquisition strategy to one focused on delivering capabilities. Instead of delivering a fighter aircraft or an unmanned air vehicle, contractors are now being asked to deliver the right collection of hardware and software to meet specific wartime challenges. This means that much of the burden associated with conceptualizing, architecting, integrating, implementing, and deploying complex capabilities into the field has shifted from desks in the Pentagon to desks at Lockheed Martin, Boeing, Rockwell, and other large aerospace and defense contractors.

In “The Army’s Future Combat Systems’ [FCS] Features, Risks and Alternatives,” the Government Accounting Office states the challenge as:

…14 major weapons systems or platforms have to be designed and integrated simultaneously and within strict size and weight limitations in less time than is typically taken to develop, demonstrate, and field a single system. At least 53 technologies that are considered critical to achieving critical performance capabilities will need to be matured and integrated into the system of systems. And the development, demonstration, and production of as many as 157 complementary systems will need to be synchronized with FCS content and schedule. [1]

The planning, management, and execution of such projects will require changes in the way organizations do business. This article reports on ongoing research into the cost challenges associated with planning and executing a system of systems (SOS) project. Because of the relatively immature nature of this acquisition strategy, there is not nearly enough hard data to establish statistically significant cost-estimating relationships. The conclusions drawn to date are based on what we know about the cost of system engineering and project management activities in more traditional component system projects augmented with research on the added factors that drive complexities at the SOS level.

The article begins with a discussion of what an SOS is and how projects that deliver SOS differ from those projects delivering stand-alone systems. Following this is a discussion of the new and expanded roles and activities associated with SOS that highlight increased involvement of system engineering resources. The focus then shifts to cost drivers for delivering the SOS capability that ties together and optimizes contributions from the many component systems. The article concludes with some guidelines for using these cost drivers to perform top-level analysis and trade-offs focused on delivering the most affordable solution that will satisfy mission needs.

Related Research

Extensive research has been conducted on many aspects of SOS by the DoD, academic institutions, and industry. Earlier research focused mainly on requirements, architecture, test and evaluation, and project management [2, 3, 4, 5, 6, 7, 8]. As the industry gets a better handle on the technological and management complexities of SOS delivery, the research is expanding from a focus on the right way to solve the problem to a focus on the right way to solve the problem affordably. At the forefront of this cost-focused research are the University of Southern California’s Center for Software Engineering [9], the Defense Acquisition University [10], Carnegie Mellon’s Software Engineering Institute [11], and Cranfield University [12].

What Is an SOS?

An SOS is a configuration of component systems that are independently useful but synergistically superior when acting in concert. In other words, it represents a collection of systems whose capabilities, when acting together, are greater than the sum of the capabilities of each system acting alone.
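One way to read this definition, purely as an illustrative sketch: assume a hypothetical scalar measure $C(\cdot)$ of delivered capability and component systems $S_1, \dots, S_n$. Both the measure and the notation are assumptions introduced here for illustration; they are not defined in the cited research. Under that assumption, the definition says:

```latex
C(S_1, S_2, \dots, S_n) \;>\; \sum_{i=1}^{n} C(S_i),
\qquad \text{with } C(S_i) > 0 \text{ for each } i
```

The strict inequality expresses the synergy of the systems acting in concert, and the condition on each $C(S_i)$ expresses that every component system is independently useful.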

According to Maier [13], an SOS must have most, if not all, of the following characteristics:

Operational independence of component systems.

Managerial independence of component systems.

Geographical distribution.

Emergent behavior.

Evolutionary development processes.

For the purposes of this research, this definition has been expanded to explicitly require a network-centric focus that enables these systems to communicate effectively and efficiently.

Today, there are many platforms deployed throughout the battlefield with limited means of communication. This becomes increasingly problematic when multiple services are deployed on a single mission, because there is no consistent means for the Army to communicate with the Navy or the Navy to communicate with the Air Force. Inconsistent and unpredictable means of communication across the battlefield often result in an unacceptable delay between detection of a threat and engagement. This can ultimately endanger the lives of our service men and women.

How Different Are SOS Projects?

How different is a project intended to deliver an SOS capability from a project that delivers an individual platform such as an aircraft or a submarine? Each case presents a set of customer requirements that need to be elicited, understood, and maintained. Based on these requirements, a solution is crafted, implemented, integrated, tested, verified, deployed, and maintained. At this level, the two projects are similar in many ways. Dig a little deeper and differences begin to emerge. The differences fall into several categories: acquisition strategy, software, hardware, and overall complexity.

The SOS acquisition strategy is capability-based rather than platform-based. For example, the customer presents a contractor with a set of capabilities to satisfy particular battlefield requirements. The contractor then needs to determine the right mix of platforms, the sources of those platforms, where existing technology is adequate, and where invention is required. Once those questions are answered, the contractor must decide how best to integrate all the pieces to satisfy the initial requirements. This capability-based strategy leads to a project with many diverse stakeholders. Besides the contractor selected as the lead system integrator (LSI), other stakeholders may include representatives from multiple services, the Defense Advanced Research Projects Agency, and the prime contractor(s) responsible for supplying component systems, as well as their subcontractors. Each of these stakeholders brings to the table different motivations, priorities, values, and business practices, and each brings new people-management issues to the project.

Software is an important part of most projects delivered to DoD customers. In addition to satisfying the requirements necessary to function independently, each of the component systems needs to support the interoperability required to function as a part of the entire SOS solution. Much of this interoperability will be supplied through the software resident in the component systems. This requirement for interoperability dictates that well-specified and applied communication protocols are a key success factor when deploying an SOS. Standards are crucial, especially for the software interfaces. Additionally, because of the need to deliver large amounts of capability in shorter and shorter timeframes, the importance of commercial off-the-shelf (COTS) software in SOS projects continues to grow.

With platform-based acquisitions, the customer generally has a fairly complete understanding of the requirements early on in the project with a limited amount of requirements growth once the project commences. Because of the large scale and long-term nature of capability-based acquisitions, the requirements tend to emerge over time with changes in governments, policies, and world situations. Because requirements are emergent, planning and execution of both hardware and software contributions to the SOS project are impacted.

SOS projects are also affected by the fact that the hardware components being used are of varying ages and technologies. In some cases, an existing hardware platform is being modified or upgraded to meet the increased needs of operating in an SOS environment, while in other instances brand new equipment with state-of-the-art technologies is being developed. SOS project teams need to deal with components that span the spectrum from high-tech but relatively untested technologies and equipment to low-tech, tried-and-true ones.

Basically, a project to deliver an SOS capability is similar in nature to a project intended to deliver a specific platform except that overall project complexity may be increased substantially. These complexities grow from capability-based acquisition strategies, increased number of stakeholders, increased overall cost (and the corresponding increased political pressure), emergent requirements, interoperability, and equipment in all stages from infancy to near retirement.

New and Expanded Roles and Activities

Understanding how these increased complexities manifest on a project is the first step in determining how the planning and control of an SOS project differ from those of a project that delivers one of the component systems. One of the biggest and most obvious differences in the project team is the existence of an LSI. The LSI is the contractor tasked with delivering the SOS that will provide the capabilities the DoD customer is looking for. The LSI can be thought of as the super prime or the prime of prime contractors. It is responsible for managing all the other primes and contractors and ultimately for fielding the required capabilities. The main areas of focus for the LSI include:

Requirements analysis for the SOS.

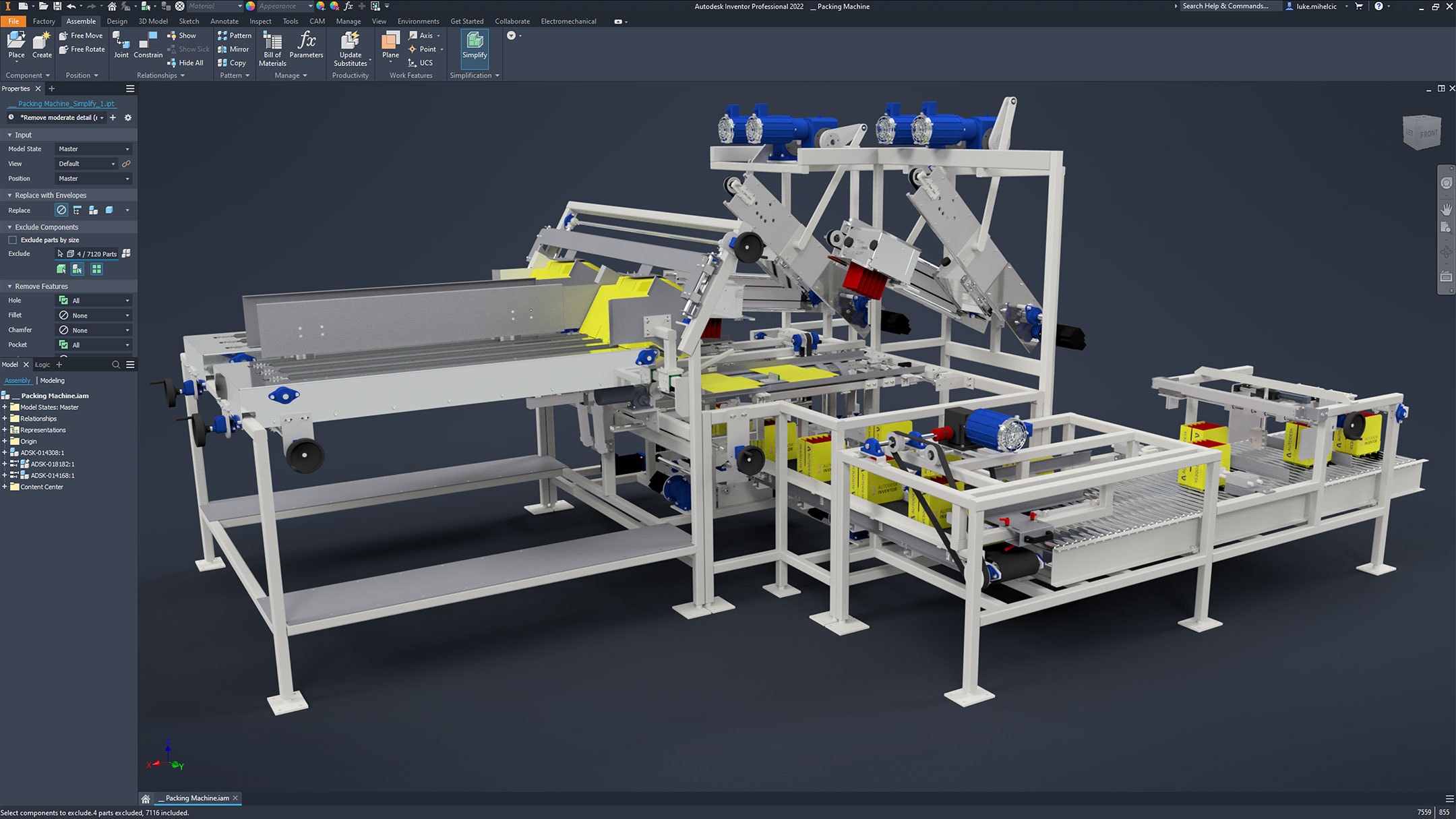

Design of SOS architecture.

Evaluation, selection, and acquisition of component systems.

Integration and test of the SOS.

Modeling and simulation.

Risk analysis, avoidance, and mitigation.

Overall program management for the SOS.

One of the primary jobs of the LSI is completing the system engineering tasks at the SOS level.

Focus on System Engineering

The following is according to the “Encyclopedia Britannica”:

“… system engineering is a technique of using knowledge from various branches of engineering and science to introduce technological innovations into the planning and development stages of systems. Systems engineering is not as much a branch of engineering as it is a technique for applying knowledge from other branches of engineering and disciplines of science in an effective combination.” [14]

System engineering as a discipline first emerged during World War II as technology improvements collided with the need for more complex systems on the battlefield. As systems grew in complexity, it became apparent that an engineering presence well versed in many engineering and science disciplines was needed to lend an understanding of the entire problem a system had to solve. To quote Admiral Grace Hopper, “Life was simple before World War II. After that, we had systems.” [15]

With this top-level view, the system engineers were able to grasp how best to optimize emerging technologies to address the specific complexities of a problem. Where an electrical engineer would concoct a solution focused on the latest electronic devices and a software engineer would develop the best software solution, the system engineer knows enough about both disciplines to craft a solution that gets the best overall value from technology. Additionally, the system engineer has the proper understanding of the entire system to perform validation and verification upon completion, ensuring that all component pieces work together as required.

Today, a new level of complexity has been added with the emerging need for SOS, and once again the diverse expertise of the system engineers is required to overcome this complexity. System engineers need to comprehend the big picture problem(s) whose solution is to be provided by the SOS. They need to break these requirements down into the hardware platforms and software pieces that best deliver the desired capability. They also need proper insight into the development, production, and deployment of the component systems to ensure not only that those systems will meet their independent requirements, but also that they will be designed and implemented to properly satisfy the interoperability and interface requirements of the SOS. It is the task of the system engineers to verify and validate that the component systems, when acting in concert with other component systems, do indeed deliver the necessary capabilities.